Please sign up for our mailing list to receive updates on IAIFI events.

You can watch our Past Colloquia recordings on YouTube.

Have suggestions for future speakers to invite? Fill out the form here!

Upcoming Colloquia

Past Colloquia

Spring 2026

- Yury Polyanskiy, Professor, MIT

- Friday, May 8, 2026, 2:00pm–3:00pm, MIT Kolker Room (26-414)

- Quantization for LLMs and matrix multiplication

- Modern LLMs store information in high-dimensional vectors rather inefficiently. For example, a sequence of tokens (18-bit integers) is mapped to 2-5k dimensional vectors stored as 16-bit floats, roughly an 18 → 100,000 bit expansion per token. Given this redundancy, it is unsurprising that LLMs tolerate substantial reductions in the precision of their basic operations (matrix multiplication) without catastrophic loss. A voluminous literature has emerged on this topic over the past five years. Despite significant algorithmic progress, however, our understanding of the fundamental limits (lower bounds) remains nascent.

In this talk I will present our initial results on the information-theoretic tradeoffs arising in quantized matrix multiplication. I will also show how information-theoretic insights enable the design of more efficient quantization algorithms for real-world LLMs, achieving state-of-the-art performance at 2–4 bits per parameter (NestQuant, WaterSIC).

- YouTube Recording

- James Requeima, Research Scientist, Google DeepMind

- Friday, April 24, 2026, 2:00pm–3:00pm, MIT Kolker Room (26-414)

- LLM Processes: Incorporating Expert Priors into Scientific Modeling via Natural Language

- Scientists and machine learning practitioners often struggle to formally integrate prior knowledge into predictive models, with probabilistic modeling expertise typically limited to specialists. In this talk, we present a regression model that processes numerical data and makes probabilistic predictions guided by natural language descriptions of prior knowledge. Large Language Models (LLMs) provide an ideal foundation for this tool, allowing experts to incorporate their domain-specific understanding and empirical insights through natural language, while leveraging the LLM’s latent problem-relevant knowledge. We explore strategies for eliciting explicit numerical predictive distributions from LLMs, which we call LLM Processes, and the rich hypothesis space implicitly encoded in LLMs.

- YouTube Recording | Talk Slides

- Tommaso Dorigo, First Researcher, INFN

- Friday, April 10, 2026, 2:00pm–3:00pm, MIT Kolker Room (26-414)

- Co-Design: the Second AI Revolution in Fundamental Science

- The success of automatic methods for image classification in 2012 marked a phase transition in the performance of machine learning algorithms; those developments have led to a revolution in the way information is extracted from complex data in fundamental science experiments. A second AI-powered revolution is under way now, thanks to the development of more advanced, powerful algorithms and methods; its end goal is the assistance of humans in the optimal design of scientific experiments.

The large dimensionality of the parameter space describing apparata such as particle collider detectors, the stochasticity of the physical processes generating relevant data in those systems, and the complexity of the objective function of multi-target experiments can now be handled by hybrid optimization techniques, multi-modal systems, generative AI, and AI agents. In particular, the concept of co-design of hardware and software gains prominence for its crucial tackling of the misalignment between the design of hardware systems and the choice and tuning of software methods for inference extraction. In this presentation I will discuss the state of the art of research in this thriving subject.

- YouTube Recording | Talk Slides

- Carlo V. Cannistraci, Chair Professor, Tsinghua Laboratory of Brain and Intelligence (THBI)

- Friday, March 13, 2026, 2:00pm–3:00pm, MIT Kolker Room (26-414)

- Brain-inspired sparse network science for next generation efficient and sustainable AI

- Artificial neural networks (ANNs) are foundational to contemporary artificial intelligence (AI); however, their conventional fully connected architectures are computationally inefficient. Contemporary large language models consume vast amounts of power at rates over 100 times that of the human brain. In stark contrast, the brain’s inherently sparse connectivity facilitates exceptional capabilities with minimal expenditure: learning with just a few watts.

Brain-inspired network science research can play a relevant role in designing low-consumption and efficient deep learning. We need to develop concepts and theories for an ecological and sustainable approach to AI. Some of these new computing paradigms can be inspired by the physics of the brain network architecture and its complex systems biology.

At the Center for Complex Network Intelligence (CCNI) within the Tsinghua Laboratory of Brain and Intelligence (THBI), our research focuses on three pivotal features of brain networks that contribute to efficient computation: (1) Connectivity Sparsity: Implementing sparse connections to reduce computational overhead while maintaining performance; (2) Connectivity Morphology: Exploring the spatial patterns of neural connections to optimize information processing; (3) Neuro-Glia Coupling: Investigating the interactions between neurons and glial cells to enhance computational efficiency.

This talk will introduce the Cannistraci-Hebb Training soft rule (CHTs), a brain-inspired network science theory that employs a gradient-free approach, relying solely on network topology to predict sparse connectivity during dynamic sparse training. CHTs have demonstrated the potential to achieve ultra-sparse networks with approximately 1% connectivity, outperforming fully connected networks in various tasks.

Additionally, we will discuss our recent study on the relationship between sparse morphological connectivity and spatiotemporal intelligence. This research introduces neuromorphic dendritic network computation with silent synapses, a model that emulates visual motion perception by integrating synaptic organization with dendritic tree-like morphology. The model exhibits exceptional performance in visual motion perception tasks, underscoring the potential of bio-inspired approaches to enhance the transparency and efficiency of modern AI systems.

- YouTube Recording | Talk Slides

- Andrew Gordon Wilson, Professor, NYU

- Friday, February 27, 2026, 2:00pm–3:00pm, MIT Kolker Room (26-414)

- From Entropy to Epiplexity: Rethinking Information for Computationally Bounded Intelligence

- Can we learn more from data than existed in the generating process itself? Can new and useful information be constructed from merely applying deterministic transformations to existing data? Can the learnable content in data be evaluated without considering a downstream task? On these questions, Shannon information and Kolmogorov complexity come up nearly empty-handed, in part because they assume observers with unlimited computational capacity and fail to target the useful information content. In this talk we identify and exemplify three seeming paradoxes in information theory: (1) information cannot be increased by deterministic transformations; (2) information is independent of the order of data; (3) likelihood modeling is merely distribution matching. To shed light on the tension between these results and modern practice, and to quantify the value of data, we introduce epiplexity, a formalization of information capturing what computationally bounded observers can learn from data. Epiplexity captures the structural content in data while excluding time-bounded entropy, the random unpredictable content exemplified by pseudorandom number generators and chaotic dynamical systems. With these concepts, we demonstrate how information can be created with computation, how it depends on the ordering of the data, and how likelihood modeling can produce more complex programs than present in the data generating process itself. We also present practical procedures to estimate epiplexity which we show capture differences across data sources, track with downstream performance, and highlight dataset interventions that improve out-of-distribution generalization. In contrast to principles of model selection, epiplexity provides a theoretical foundation for data selection, guiding how to select, generate, or transform data for learning systems.

- YouTube Recording | Talk Slides

- Roger Melko, Professor, University of Waterloo

- Friday, February 13, 2026, 2:00pm–3:00pm, MIT Kolker Room (26-414)

- Language Models for Quantum Simulation

- In the last few years, generative models have demonstrated a striking ability to scale, driving the rapid rise of GPT-like large language models. Over the same period, experimental quantum devices have advanced rapidly, with the simulation of quantum phases and phase transitions emerging as a key application of today’s quantum hardware. The growing availability of projective measurements from quantum simulators opens the exciting possibility of training custom generative models directly on quantum data. In this talk, I will demonstrate how such models can uncover hidden structures in quantum states, infer emergent properties, and predict outcomes of future experiments. I speculate on how these and other AI tools might ultimately contribute to the challenge of scaling up quantum simulations, with the goal of discovering new physics in complex quantum many-body systems.

- YouTube Recording | Talk Slides

Fall 2025

- T. Konstantin Rusch, Assistant Professor, Max Planck Institute for Intelligent Systems

- Friday, November 21, 2025, 2:00pm–3:00pm, MIT Kolker Room (26-414)

- Symbiosis of Physics and Artificial Intelligence

- This was a joint colloquium with MIT CSAIL.

Artificial Intelligence (AI) is transforming how we advance science and engineering, yet current approaches remain limited by computational inefficiencies, insufficient theoretical grounding, and a focus on commercial rather than scientific applications. To address these, we propose an interdisciplinary research agenda that bridges physics and AI along two complementary directions: physics for AI and AI for physics. In the former, we develop physics-inspired AI models that exploit structural principles from physical systems to design architectures that are more efficient, expressive, and mathematically tractable. We further enhance scalability of our proposed models through control-theoretic in-training compression methods that enable efficient training of large models. In the latter direction, we apply AI, including our physics-inspired architectures, to challenging problems in the physical sciences, such as gravitational-wave analysis and scientific computing. One highlighted contribution in this context is Message-Passing Monte Carlo (MPMC), the first machine-learning approach for generating low-discrepancy point sets, which combines geometric deep learning with discrepancy theory and achieves 4- to 25-fold performance improvements over prior methods in physics, scientific machine learning, and robotics applications. Together, these efforts highlight the potential of uniting physics and AI to achieve models that are both powerful and principled, paving the way for the next generation of scientific AI systems.

- YouTube Recording | Talk Slides

- Melanie Weber, Assistant Professor of Applied Mathematics and of Computer Science, Harvard University

- Friday, November 14, 2025, 2:00pm–3:00pm, MIT Kolker Room (26-414)

- Feature Geometry guides Model Design in Deep Learning

- The geometry of learned features can provide crucial insights on model design in deep learning. In this talk, we discuss two recent lines of work that reveal how the evolution of learned feature geometry during training both informs and is informed by architecture choices. First, we explore how deep neural networks transform the input data manifold by tracking its evolving geometry through discrete approximations via geometric graphs that encode local similarity structure. Analyzing the graphs’ geometry reveals that as networks train, the models’ nonlinearities drive geometric transformations akin to a discrete Ricci flow. This perspective yields practical insights for early stopping and network depth selection informed by data geometry. The second line of work concerns learning under symmetry, including permutation symmetry in graphs or translation symmetry in images. Group-convolutional architectures can encode such structure as inductive biases, which can enhance model efficiency. However, with increased depth, conventional group convolutions can suffer from instabilities that manifest as loss of feature diversity. A notable example is oversmoothing in graph neural networks. We discuss unitary group convolutions, which provably stabilize feature evolution across layers, enabling the construction of deeper networks that are stable during training.

- YouTube Recording

- Andy Keller, Research Fellow, Harvard University Kempner Institute

- Friday, October 24, 2025, 1:00pm–2:00pm, Kempner Institute, Harvard SEC Room 6.242 (150 Western Ave, Boston)

- Flow Equivariance: Enforcing Time-Parameterized Symmetries in Sequence Models

- Data arrives at our senses (or sensors) as a continuous stream, smoothly transforming from one instant to the next. These smooth transformations can be viewed as continuous symmetries of the environment that we inhabit, defining equivalence relations between stimuli over time. In machine learning, neural network architectures that respect symmetries of their data are called equivariant and have provable benefits in terms of generalization ability and sample efficiency. To date, however, equivariance has been considered only for static transformations and feed-forward networks, limiting its applicability to sequence models, such as recurrent neural networks (RNNs), and corresponding time-parameterized sequence transformations. In this talk, I will describe how equivariant network theory may be extended to this regime of ‘flows’ – one-parameter Lie subgroups capturing natural transformations over time, such as visual motion. I will begin by showing that standard RNNs are generally not flow equivariant: their hidden states fail to transform in a geometrically structured manner for moving stimuli. I will then show how flow equivariance can be introduced, and demonstrate that these models significantly outperform their non-equivariant counterparts in terms of training speed, length generalization, and velocity generalization, on a variety of tasks from next step prediction, to sequence classification, and partially observed ‘world modeling’ in both 2D and 3D worlds. I will conclude with hints at how this framework also enables constructing sequence models with equivariance to space-time symmetries such as Lorentz transformations relevant to the physics community.

- YouTube Recording | Talk Slides

- Peter Lu, Assistant Professor, Tufts University

- Friday, October 10, 2025, 2:00pm–3:00pm, MIT Kolker Room (26-414)

- Scientific Machine Learning for Modeling and Understanding Complex Physical Systems

- Complex systems in nature—from climate to materials science—often exhibit strongly interacting, high-dimensional dynamics that are difficult to characterize, model, and understand. In the scientific community, there has been a growing interest in using modern machine learning (ML) tools to tackle these systems by identifying relevant physical features, accelerating expensive simulations, and solving difficult inverse problems. However, despite significant advances over the past decade, we are still learning how to effectively use ML in science and engineering. My work focuses on developing foundational ML methods for modeling and understanding complex physical systems from high-dimensional chaotic dynamics to many-body quantum systems. In this talk, I will introduce novel contrastive learning-based approaches for training physically consistent ML emulators and performing high-dimensional simulation-based inference. I will also discuss new ML methods for efficiently simulating interacting quantum systems. These advances demonstrate how we can use ML to solve scientific problems by combining tools from representation learning and generative modeling with theoretical insights from statistics, dynamical systems theory, and mathematical physics.

- YouTube Recording | Talk Slides

- Berthy Feng, Postdoctoral Fellow, IAIFI Fellow

- Friday, September 26, 2025, 2:00pm–3:00pm, MIT Kolker Room (26-414)

- Advancing Scientific Computational Imaging through Data-driven and Physics-based Priors

- The core idea of computational imaging is to supplement limited observable data with human-imposed assumptions, or priors. However, incorporating priors in the imaging process poses computational challenges, including efficiently expressing sophisticated priors, appropriately balancing priors with observations, and gently enforcing physics constraints. My work addresses such challenges with principled methods for bringing informative assumptions into scientific computational imaging. In this talk, I will focus on black-hole imaging problems through the lens of both data-driven priors and physics-based priors.

On the data-driven side, I will present work on score-based priors, including a posterior-estimation method and results of re-imagining the famous M87 black hole from real data with score-based priors. On the physics-based side, I will show we have been able to tackle extremely under-determined imaging problems by enforcing physics constraints, including the problem of single-viewpoint dynamic tomography of emission near a black hole. Finally, I will address the intersection of AI and physics by presenting neural approximate mirror maps, a way to enforce physics constraints on generative models.

- YouTube Recording | Talk Slides

- Francisco Villaescusa-Navarro, Research Scientist, Simons Foundation

- Friday, September 12, 2025, 2:00pm–3:00pm, MIT Kolker Room (26-414)

- The Denario project: Deep knowledge AI agents for scientific discovery

- Artificial intelligence is rapidly changing the way we perform scientific research. In this talk, I will illustrate how AI agents can assist in a wide range of scientific tasks — from hypothesis generation and data exploration to code development and paper writing. I will present a variety of agents that we have designed for different purposes, including the CAMELS agents, CMBAgent, and CLAPP, highlighting how each of them tackles different challenges. To illustrate the potential of AI agents, I will try to write several scientific papers live, covering different areas of research and showcasing the versatility of these tools. Next, I will show some of the papers generated by Denario (our in-house AI research assistant), covering many research areas such as astrophysics, biology, biophysics, chemistry, medicine, planetary physics, mathematical physics, and neuroscience. Beyond the technical aspects, I will also discuss the broader implications: what these advances mean for the practice of science, how they may reshape the workflow of researchers, and the new skills we may need to foster in the next generation of scientists. Finally, I will invite an open discussion about both the opportunities and risks of deploying AI agents in scientific contexts, with the goal of reflecting collectively on how these technologies might transform the future of research.

- YouTube Recording | Talk Slides

Spring 2025

- Joshua Bloom, Professor of Astronomy, University of California Berkeley

- Friday, May 9, 2025, 2:00pm–3:00pm, MIT Kolker Room (26-414)

- AI Accelerating Inquiry and Insight in Astrophysics

- The astrophysical data deluge has swamped traditional workflows, fostering a growing reliance on AI/ML tooling. Yet despite successes in post-data collection analysis with AI/ML, we are only beginning to realize its full potential across the broader scientific endeavor. From real-time control, to survey optimization, there is tremendous potential for AI/ML to improve the upstream efficacy of data collection. Likewise, downstream from data are exciting opportunities for AI-enabled hypothesis generation and human-centered exploration. I will ground this talk with specific examples of ongoing work in simulation based inference, self-supervised multi-modal foundation models (AstroM³), Rubin/LSST active optics, and neural compression (AstroCompress).

- YouTube Recording | Talk Slides

- J. Nathan Kutz, Robert Bolles and Yasuko Endo Professor of Applied Mathematics and Electrical and Computer Engineering, University of Washington

- Friday, April 25, 2025, 2:00pm–3:00pm, MIT Kolker Room (26-414)

- Learning Physics from Videos

- Sensing is a universal task in science and engineering. Downstream tasks from sensing include learning dynamical models, inferring full state estimates of a system (system identification), control decisions, and forecasting. These tasks are exceptionally challenging to achieve with limited sensors, noisy measurements, and corrupt or missing data. Existing techniques typically use current (static) sensor measurements to perform such tasks and require principled sensor placement or an abundance of randomly placed sensors. In contrast, we propose a SHallow REcurrent Decoder (SHRED) neural network structure which incorporates (i) a recurrent neural network (LSTM) to learn a latent representation of the temporal dynamics of the sensors, and (ii) a shallow decoder that learns a mapping between this latent representation and the high-dimensional state space. By explicitly accounting for the time-history, or trajectory, of the sensor measurements, SHRED enables accurate reconstructions with far fewer sensors, outperforms existing techniques when more measurements are available, and is agnostic towards sensor placement. In addition, a compressed representation of the high-dimensional state is directly obtained from sensor measurements, which provides an on-the-fly compression for modeling physical and engineering systems. Forecasting is also achieved from the sensor time-series data alone, producing an efficient paradigm for predicting temporal evolution with an exceptionally limited number of sensors. In the example cases explored, including turbulent flows, complex spatio-temporal dynamics can be characterized with exceedingly limited sensors that can be randomly placed with minimal loss of performance.

- YouTube Recording | Talk Slides

- Akshunna Dogra, Incoming IAIFI Fellow, PhD Student, Imperial College London

- Friday, April 11, 2025, 2:00pm–3:00pm, MIT Kolker Room (26-414)

- Many-fold Learning

- Iterative optimization techniques - ranging from the nonconvex, nonlinear gradient methods central to machine learning (ML) to simple regression fits over small datasets - have been instrumental in solving a wide variety of problems since the advent of modern computing. However, beyond special cases of linear architectures and convexified settings, a unified framework for describing optimization dynamics remains in its early stages, especially in the complex, high-dimensional regimes characteristic of ML. In this talk, we explore the foundations of a general perspective on optimization dynamics, proposing a unified framework for gradient-based methods. We view optimization as a dynamic process over model sets, shaped solely by the problem of interest, the chosen architecture and their interplay, generalizing a large class of seemingly diverse theoretical and empirical insights into a coherent whole. Finally, we discuss the computational advantages of this unified approach and the limitations of applying a ‘one-size-fits-all’ methodology to diverse optimization landscapes.

- YouTube Recording | Talk Slides

- Cengiz Pehlevan, Kempner Institute/Harvard University; Dmitry Krotov, MIT-IBM Watson AI Lab/IBM Research; Ila Fiete, McGovern Institute/MIT

- Friday, March 14, 2025, 3:00pm–4:15pm, MIT Schwarzman College of Computing (MIT 45-102)

- Research Panel on the 2024 Nobel Prize in Physics

- The 2024 Nobel Prize in Physics recognized John Hopfield and Geoffrey Hinton for their development of foundational and physics-inspired machine learning frameworks (the Hopfield network and the Boltzmann machine, respectively). Following on a fireside chat discussing the historical significance of the award in Fall 2024, IAIFI is hosting a panel discussion that will dive deeper into the impact of Hopfield and Hinton’s work, and more broadly of physics insights, on interdisciplinary research tackling the understanding of artificial and biological intelligent systems. Submit questions for the panel at any time.

- YouTube Recording | Talk Slides

- Daniel Whiteson, Professor of Physics and Astronomy, UC Irvine

- Friday, February 28, 2025, 2:00pm–3:00pm, MIT Kolker Room (26-414)

- Learning to find weird particles

- Finding the tracks that particles make through detectors is a critical component of identifying new physics and phenomena, but is a very challenging combinatorial problem. Traditionally, track finding codes assume that tracks must be helical, which simplifies the task but also restricts power to discover new physics which might produce non-helical tracks, effectively ignoring some potentially striking signatures. However, recent advances in ML-based tracking allow for new inroads into previously inaccessible territory, such as efficient reconstruction of tracks that do not follow helical trajectories. I will present a demonstration of training a network to reconstruct a particular type of non-helical tracks, quirks, and discuss the potential to generalize ML tracking to a wider class of non-helical tracks, enabling a search for overlooked anomalous tracks. I’ll end by talking briefly about my experience in science communication.

- YouTube Recording | Talk Slides

- Sam Bright-Thonney, Postdoctoral Fellow, IAIFI Fellow

- Friday, February 14, 2025, 2:00pm–3:00pm, MIT Kolker Room (26-414)

- Collide and compress: building robust embedding spaces to simplify new physics searches at the LHC

- Artificial intelligence has revolutionized the way we do physics at the Large Hadron Collider (LHC), and has found its way into nearly every stage of the process of turning raw detector measurements into scientific results. At present, most AI use cases remain highly application-specific, with models trained to isolate a narrow class of signals. The recent emergence of highly capable “foundation models” across multiple domains — coupled with the absence of clear phase space targets for discovering new physics at the LHC — motivates a shift in focus towards large, multipurpose models. In this talk we present recent work towards this end, unified by the idea of constructing physically meaningful embedding spaces for collider data. We highlight a particular application to anomaly detection at the LHC, discuss questions of robustness, and outline directions of ongoing and future work.

- YouTube Recording | Talk Slides

Fall 2024

- Yuan-Sen Ting, Associate Professor in Astronomy, Ohio State University

- Friday, November 22, 2024, 2:00pm–3:00pm, MIT Kolker Room (26-414)

- Expediting Astronomical Discovery with Large Language Models: Progress, Challenges, and Future Directions

- The expansive, interdisciplinary nature of astronomy, combined with its open-access culture, makes it an ideal testing ground for exploring how Large Language Models (LLMs) can accelerate scientific discovery. In this talk, we present our recent advances in applying LLMs to real-world astronomical challenges. Through self-play reinforcement learning, we demonstrate how LLM agents can conduct end-to-end research tasks in galaxy spectra fitting, encompassing data analysis, strategy refinement, and outlier detection—effectively mimicking human intuition and deep domain knowledge. Our agent, named Mephisto, successfully rediscovered and analyzed the Little Red Dots, an intriguing new class of galaxies recently identified by the James Webb Space Telescope. While autonomous research agents like Mephisto could theoretically help analyze all observed sources, the cost of closed-source solutions remains prohibitive for large-scale applications involving billions of objects. To address this limitation, our team at AstroMLab is developing lightweight, open-source specialized models and evaluating them against carefully curated astronomical benchmarks. Our research shows that specialized 8B-parameter LLMs can match GPT-4’s performance on specific tasks when properly pretrained and fine-tuned. Despite ongoing challenges, we see immense potential in scaling up automated astronomical inference, which could transform how astronomical research is conducted and accelerate our understanding of the Universe.

- YouTube Recording | Talk Slides

- George Barbastathis, Professor, MIT; Di Luo, Assistant Professor, UCLA; Alex Atanasov, Postdoc, Harvard; Hidenori Tanaka, Group Leader, CBS-NTT Program in Physics of Intelligence, Harvard University

- Friday, November 1, 2024, 2:00pm–3:00pm, MIT Kolker Room (26-414)

- IAIFI Fireside Chat on 2024 Physics Nobel Prize

- Given the recent Nobel Prize in Physics, awarded to John Hopfield and Geoffrey Hinton for their development of foundational and physics-inspired machine learning frameworks (the Hopfield network and the Boltzmann machine, respectively), IAIFI is excited to host a discussion explaining the research underlying the award and its widespread impact. The discussion will include historical context and current developments in using physics for AI innovation. Experts at the intersection of physics, neuroscience, and computer science will share their perspectives on the research behind this year’s Nobel Prize in Physics and the next great discoveries to come from similarly interdisciplinary, curiosity-driven research.

- YouTube Recording | Talk Slides

- Fernanda Viégas, Professor of Computer Science, Harvard University; and Martin Wattenberg, Professor of Computer Science, Harvard University

- Friday, October 25, 2024, 2:00pm–3:00pm, MIT Kolker Room (26-414)

- From Victorian trains to chatbots, via high-dimensional geometry

- Is language all you need to interact with AI, or is there room for other interfaces? Our talk will explore how we might help people work with AI effectively, safely, and enjoyably. Taking inspiration from the role of transparency in Victorian railways, we dive into the internal geometry of deep neural networks. The goal is a new kind of AI instrumentation: a dashboard for large language models. We argue that if toasters and coffeemakers have displays that tell you their internal state, we should expect nothing less from a machine learning model with a trillion parameters.

- YouTube Recording | Talk Slides

- Thomas Harvey, Postdoctoral Fellow, IAIFI

- Friday, October 11, 2024, 2:00pm–3:00pm, MIT Cosman Room (6C-442)

- Navigating the String Landscape with Machine Learning Techniques

- String theory is a framework for quantum gravity that seemingly encompasses all necessary features to describe our universe. The study of this theory has yielded significant insights in various domains of physics and mathematics, such as the quantum nature of black holes and the discovery of mirror symmetry. Despite these successes, due to the vast array of possible initial conditions, it remains unclear if we live somewhere in the “string landscape”. In this talk, we present efforts to leverage Reinforcement Learning to navigate this landscape and geometrically engineer quasi-realistic models of particle physics. Furthermore, we explore how recent advances in applying neural networks to numerical geometry have enabled the calculation of previously inaccessible properties of the low-energy theory, particularly Yukawa couplings and quark masses.

- YouTube Recording | Talk Slides

- Katie Bouman, Assistant Professor of Computing and Mathematical Sciences (CMS), Caltech

- Monday, September 16, 2024, 4:00pm–5:00pm, MIT Kolker Room (26-414)

- Seeing Beyond the Blur: Imaging Black Holes with Increasingly Strong Assumptions

- At the heart of our Milky Way galaxy lies a supermassive black hole called Sagittarius A* that is evolving on the timescale of mere minutes. This talk will present the methods and procedures used to produce the first images of Sagittarius A* as well as discuss future directions we are taking to leverage machine learning to sharpen our view of the black hole, including mapping its evolving environment in 3D. It has been theorized for decades that a black hole will leave a ‘shadow’ on a background of hot gas. However, due to its small size, traditional imaging approaches require an Earth-sized radio telescope. In this talk, I discuss techniques we have developed to photograph a black hole using the Event Horizon Telescope, a network of telescopes scattered across the globe. Recovering an image from this data requires solving an ill-posed inverse problem which necessitates the use of image priors to reduce the space of possible solutions. Although we have learned a lot from these initial images already, remaining scientific questions motivate us to improve this computational telescope to see black hole phenomena still invisible to us. In particular, we will discuss approaches we have developed to incorporate data-driven diffusion model priors into the imaging process to sharpen our view of the black hole and understand the sensitivity of the image to different underlying assumptions. Additionally, we will discuss how we have developed techniques that allow us to extract the evolving structure of our own Milky Way’s black hole over the course of a night. In particular, we introduce Orbital Black Hole Tomography, which integrates known physics with a neural representation to map evolving flaring emission around the black hole in 3D for the first time.

- YouTube Recording | Talk Slides

- François Charton, Research Engineer, Meta

- Friday, September 13, 2024, 2:00pm–3:00pm, MIT Kolker Room (26-414)

- Transformers meet Lyapunov. Solving a long-standing open problem in mathematics

- A condition for the global stability of a dynamical system is the existence of an entropy-like Lyapunov function. Unfortunately, no systematic method for discovering such functions is known, except in very special cases. Transformers can indeed be trained to discover new Lyapunov functions. I discuss our results, with a particular focus on the specific issues of training language models on open or hard problems.

- YouTube Recording | Talk Slides

Spring 2024

- Jennifer Ngadiuba, Associate Scientist, Fermi National Accelerator Laboratory

- Friday, April 12, 2024, 2:00pm–3:00pm, MIT Kolker Room (26-414)

- Accelerating new physics discoveries at the LHC with anomaly detection

- The Large Hadron Collider (LHC) is the world’s largest and most powerful particle accelerator, located at CERN. It has been instrumental in numerous groundbreaking discoveries, including the Higgs boson. Despite these successes, vast portions of potential new physics remain unexplored due to the complexity and sheer volume of data generated by the LHC’s experiments. Traditional methods for analyzing these data often rely on pre-defined models, potentially overlooking novel phenomena that do not fit into existing theoretical frameworks. This talk presents innovative approaches to anomaly detection as a means to unearth new physics signatures from LHC data, accelerating the discovery of phenomena beyond the Standard Model.

- YouTube Recording | Talk Slides

- Soledad Villar, Assistant Professor, Johns Hopkins University

- Friday, March 22, 2024, 2:00pm–3:00pm, MIT Kolker Room (26-414)

- Exact and approximate symmetries in machine learning

- In this talk, we explain how we can use invariant theory tools to express machine learning models that preserve symmetries arising from physical law. We consider applications to self-supervised contrastive learning and cosmology.

- YouTube Recording | Talk Slides

- Laurence Perreault-Levasseur, Assistant Professor, University of Montreal

- Friday, February 9, 2024, 2:00pm–3:00pm, MIT Kolker Room (26-414)

- Data-Driven Strong Gravitational Lensing Analysis in the Era of Large Sky Surveys

- Despite the remarkable success of the standard model of cosmology, the lambda CDM model, at predicting the observed structure of the universe over many scales, very little is known about the fundamental nature of its principal constituents: dark matter and dark energy. In the coming years, new surveys and telescopes will provide an opportunity to probe these unknown components. Strong gravitational lensing is emerging as one of the most promising probes of the nature of dark matter, as it can, in principle, measure its clustering properties on sub-galactic scales. The unprecedented volumes of data that will be produced by upcoming surveys like LSST, however, will render traditional analysis methods entirely impractical. In recent years, machine learning has been transforming many aspects of the computational methods we use in astrophysics and cosmology. I will share our recent work in developing machine learning tools for the analysis of strongly lensed systems.

- YouTube Recording | Talk Slides

Fall 2023

- Xiaoliang Qi, Professor of Physics, Stanford University

- Friday, December 8, 2023, 2:00pm–3:00pm, MIT Kolker Room (26-414)

- A Phase Diagram of the Transformer at Initialization

- The growth in the complexity of training modern machine learning architectures is in part due to the large number of hyperparameters which control their initialization and training. In this work, we study how key hyperparameters such as the activation function, the variance of the weight initialization distribution, and the strength of the residual connections affect the trainability of deep transformers. We find that randomly initialized transformers exhibit four phases characterized by vanishing or exploding gradients, and the presence or absence of rank collapse in the token matrix. The standard transformer initialization lies near the intersection of these four phases, balancing these effects. This framework explains the empirical success of modern transformer initialization, and also points to a subtle pathology in the attention mechanism which cannot perfectly balance these competing effects in an extensive phase.

- YouTube Recording

- François Lanusse, Researcher, CNRS

- Friday, October 13, 2023, 2:00pm–3:00pm, MIT Kolker Room (26-414)

- Merging Deep Learning with Physical Models for the Analysis of Cosmological Surveys

- As we move towards the next generation of cosmological surveys, our field is facing new and outstanding challenges at all levels of scientific analysis, from pixel-level data reduction to cosmological inference. As powerful as Deep Learning (DL) has proven to be in recent years, in most cases a DL approach alone proves to be insufficient to meet these challenges, and is typically plagued by issues including robustness to covariate shifts, interpretability, and proper uncertainty quantification, impeding its exploitation in scientific analyses. In this talk, I will instead advocate for a unified approach merging the robustness and interpretability of physical models, the proper uncertainty quantification provided by a Bayesian framework, and the inference methodologies and computational frameworks brought about by the Deep Learning revolution. In practice this will mean following two main directions: (1) using deep generative models as a practical way to manipulate implicit distributions (either data- or simulation-driven) within a larger Bayesian framework, and (2) developing automatically differentiable physics models amenable to gradient-based optimization and inference. I will illustrate these concepts in a range of applications in the context of cosmological galaxy surveys, from pixel-level astronomical data processing (e.g. deconvolution), to inferring cosmological parameters through fast and automatically differentiable cosmological N-body simulations. Methodology-wise these examples will involve in particular diffusion generative models, score-enhanced simulation-based inference, and hybrid physical/neural ODEs.

- YouTube Recording

- Hidenori Tanaka, Research Scientist, NTT Research, Harvard University

- Friday, September 15, 2023, 2:00pm–3:00pm, MIT Cosman Room (6C-442)

- Physics of Neural Phenomena: Understanding Learning and Computation through Symmetry

- Once described as alchemy, a quantitative science of machine learning is emerging. This talk will seek to unify the scientific approaches taken in machine learning, neuroscience, and physics. We will show how conceptual and mathematical tools in physics, such as symmetry, may illuminate the universal mechanisms behind learning and computation in biological and artificial neural networks. We plan to (i) generalize Noether’s theorem in physics to show how scale symmetry of normalization makes learning more stable and efficient, (ii) propose a new framework for studying compositional generalization in generative models, and (iii) identify phase transitions in the loss landscapes of self-supervised learning as a potential cause of a failure mode called dimensional collapse.

- YouTube Recording

Spring 2023

- Uros Seljak, Professor, UC Berkeley

- Friday, April 21, 2023, 2:00pm–3:00pm, MIT Kolker Room (26-414)

- Data science techniques for optimal cosmology analysis

- Cosmological surveys aim to map the universe and extract cosmological information from the data. Cosmological data contain a wealth of information on all scales, but its full extraction in the non-linear regime remains unsolved. I will discuss two different methods that enable optimal analysis of cosmological data. In the first method we use generative Multi-scale Normalizing Flows to learn the high dimensional data likelihood of 2d data such as weak lensing. This method improves the figure of merit by a factor of 3 when compared to current state of the art discriminative learning methods. In the second method we use high dimensional Bayesian inference for optimal analysis of 3d galaxy surveys. I will describe recent developments of fast gradient based N-body simulations, and of fast gradient based MCMC samplers, which make optimal analysis of upcoming 3d galaxy surveys feasible. In particular, recently developed Microcanonical Langevin and Hamiltonian Monte Carlo samplers are often faster by an order of magnitude or more than the previous state of the art. Similar improvements are expected for other high dimensional sampling problems, such as lensing of the cosmic microwave background, as well as in other fields such as Molecular Dynamics, Lattice QCD, Statistical Physics etc.

- YouTube Recording

- Yonatan Kahn, Assistant Professor, UIUC

- Friday, March 10, 2023, 2:00pm–3:00pm, MIT Kolker Room (26-414)

- Dumb Machine Learning for Physics

- Machine learning is now a part of physics for the foreseeable future, but many deep learning tools, architectures, and algorithms are imported from industry to physics with minimal modifications. Does physics really need all of these fancy techniques, or does “dumb” machine learning with the simplest possible neural network suffice? The answer may depend on the extent to which the training data relevant to physics problems is truly analogous to problems from industry such as image classification, which in turn depends on the topology and statistical structures of physics data. This talk will not endeavor to answer this very broad and difficult question, but will rather provide a set of illustrative examples inspired by a novice’s exploration of this rapidly-developing field.

- YouTube Recording | Talk Slides

- Boris Hanin, Assistant Professor, Princeton University

- Friday, February 10, 2023, 2:00pm–3:00pm, MIT Kolker Room (26-414)

- Bayesian Interpolation with Deep Linear Networks

- This talk is based on joint work (arXiv:2212.14457) with Alexander Zlokapa, which gives exact non-asymptotic formulas for Bayesian posteriors in deep linear networks. After providing some general motivation, I will focus on explaining results of two kinds. First, I will state a precise result showing that infinitely deep linear networks compute optimal posteriors starting from universal, data-agnostic priors. Second, I will explain how a novel scaling parameter – given by # data * depth / width – controls the effective depth and complexity of the posterior.

- YouTube Recording

Fall 2022

- Anima Anandkumar, Professor, Caltech & Director of ML, NVIDIA

- Tuesday, November 1, 2022, 11:00am–12:00pm, MIT Star Conference Room

- AI Accelerating Sciences: Neural operators for Learning Between Function Spaces

- Deep learning surrogate models have shown promise in modeling complex physical phenomena such as fluid flows, molecular dynamics, and material properties. However, standard neural networks assume finite-dimensional inputs and outputs, and hence cannot withstand a change in resolution or discretization between training and testing. We introduce Fourier neural operators that can learn operators, which are mappings between infinite dimensional function spaces. They are discretization-invariant and can generalize beyond the discretization or resolution of training data. When applied to weather forecasting, Carbon Capture and Storage (CCS), material plasticity, and many other processes, neural operators capture fine-scale phenomena and achieve skill similar to that of the gold-standard numerical weather models, while being 4-5 orders of magnitude faster.

- YouTube Recording | Talk Slides

- Daniel Goldman, Professor, Georgia Tech

- Friday, October 14, 2022, 2:00pm–3:00pm, MIT Kolker Room (26-414)

- Robophysics: robotics meets physics

- Robots will soon move from the factory floor and into our lives (e.g. autonomous cars, package delivery drones, and search-and-rescue devices). However, compared to living systems, robot capabilities in complex environments are limited. I believe the mindset and tools of physics can help facilitate the creation of robust self-propelled autonomous systems. This “robophysics” approach – the systematic search for novel dynamics and principles in robotic systems – can aid the computer science and engineering approaches which have proven successful in less complex environments. The rapidly decreasing cost of constructing sophisticated robot models with easy access to significant computational power bodes well for such interactions. And such devices are valuable as models of living systems (complementing theoretical and computational modeling approaches) and can lead to development of engineered devices that begin to achieve life-like locomotor abilities on and within complex environments. They also provide excellent tools to study questions in active matter physics. In this talk, I will provide examples of the above using recent studies in which concepts from modern physics – geometric phase and mechanical “diffraction” – have led to new insights into biological and engineered locomotion across scales.

- Slides and recording not available.

- Lucy Colwell, Associate Professor, Cambridge University

- Friday, September 16, 2022, 2:00pm–3:00pm, MIT Kolker Room (26-414)

- Data-driven ML models that predict protein function from sequence

- The ability to predict protein function directly from sequence is a central challenge that will enable the discovery of new proteins with specific functional properties. The evolutionary trajectory of a protein through sequence space is constrained by its functional requirements, and the explosive growth of evolutionary sequence data allows the natural variation present in homologous sequences to be used to infer these constraints and accurately predict protein function. However, state-of-the-art alignment-based techniques cannot predict function for one-third of microbial protein sequences, hampering our ability to exploit data from diverse organisms. I will present ML models that accurately predict the presence and location of functional domains within protein sequences. These models have recently added hundreds of millions of annotations to public databases that could not be made using existing approaches. A key advance is the ability for models to extrapolate and thus to be able to accurately predict functional annotations that were not seen during model training. To address this challenge we draw inspiration from recent advances in natural language processing to train models that translate between amino acid sequences and functional descriptions. Finally, I will illustrate the potential of active learning to discover new sequences through the design and experimental validation of proteins and peptides for therapeutic applications.

- YouTube Recording

Spring 2022

- Laura Waller, Associate Professor, EECS, University of California, Berkeley

- Friday, April 29, 2022, 2:00pm–3:00pm, Virtual

- Computational Microscopy

- Computational imaging involves the joint design of imaging system hardware and software, optimizing across the entire pipeline from acquisition to reconstruction. Computers can replace bulky and expensive optics by solving computational inverse problems, or images can be reconstructed from scattered light. This talk will describe new microscopes that use computational imaging to enable 3D, aberration and phase measurement using simple hardware that is easily adoptable and advanced image reconstruction algorithms based on large-scale optimization and learning.

- YouTube Recording

- Hiranya Peiris, Professor of Astrophysics, University College London; Professor of Cosmoparticle Physics at the Oskar Klein Centre, Stockholm

- Friday, April 15, 2022, 2:00pm–3:00pm, Virtual

- Prospects for understanding the physics of the Universe

- The remarkable progress in cosmology over the last decades has been driven by the close interplay between theory and observations. Observational discoveries have led to a standard model of cosmology with ingredients that are not present in the standard model of particle physics – dark matter, dark energy, and a primordial origin for cosmic structure. Their physical nature remains a mystery, motivating a new generation of ambitious sky surveys. However, it has become clear that formidable modeling and analysis challenges stand in the way of establishing how these ingredients fit into fundamental physics. I will discuss progress in harnessing advanced machine-learning techniques to address these challenges, giving some illustrative examples. I will highlight the particular relevance of interpretability and explainability in this field.

- YouTube Recording | Talk Slides

- Yann LeCun, VP and Chief AI Scientist, Meta

- Friday, April 1, 2022, 2:00pm–3:00pm, Virtual

- A path towards human-level intelligence

- In his talk, Yann LeCun will discuss a path towards human-level intelligence, drawing on his extensive expertise in the field of artificial intelligence and deep learning.

- YouTube Recording | Talk Slides

- Giuseppe Carleo, Assistant Professor, Computational Quantum Science Laboratory, École Polytechnique Fédérale de Lausanne (EPFL)

- Friday, March 4, 2022, 2:00pm–3:00pm, Virtual

- Neural-Network Quantum States: new computational possibilities at the boundaries of the many-body problem

- Machine-learning-based approaches, routinely adopted in cutting-edge industrial applications, are increasingly being used to study fundamental problems in science. Many-body physics is very much at the forefront of these exciting developments, given its intrinsic ‘big-data’ nature. In this talk, I will present selected applications to the quantum realm, discussing how a systematic and controlled machine learning of the many-body wave-function can be realized, and how these approaches outperform existing state-of-the-art methods in various domains.

- YouTube Recording | Talk Slides

- Kyle Cranmer, Professor of Physics and Data Science, New York University; Visiting Scientist at Meta AI

- Friday, February 18, 2022, 2:00pm–3:00pm, Virtual

- Vignettes in physics-inspired AI research

- Distinct from pure basic research and pure applied research is the concept of use-inspired research. The claim is that foundational advances are often inspired by the context and particularities of a specific applied problem setting – reality is stranger than fiction. I will give a few examples of advances in AI inspired by problems in physics, which have also been found to be useful in unexpected areas ranging from algorithmic fairness to genomics and epidemiology.

- YouTube Recording | Talk Slides

Fall 2021

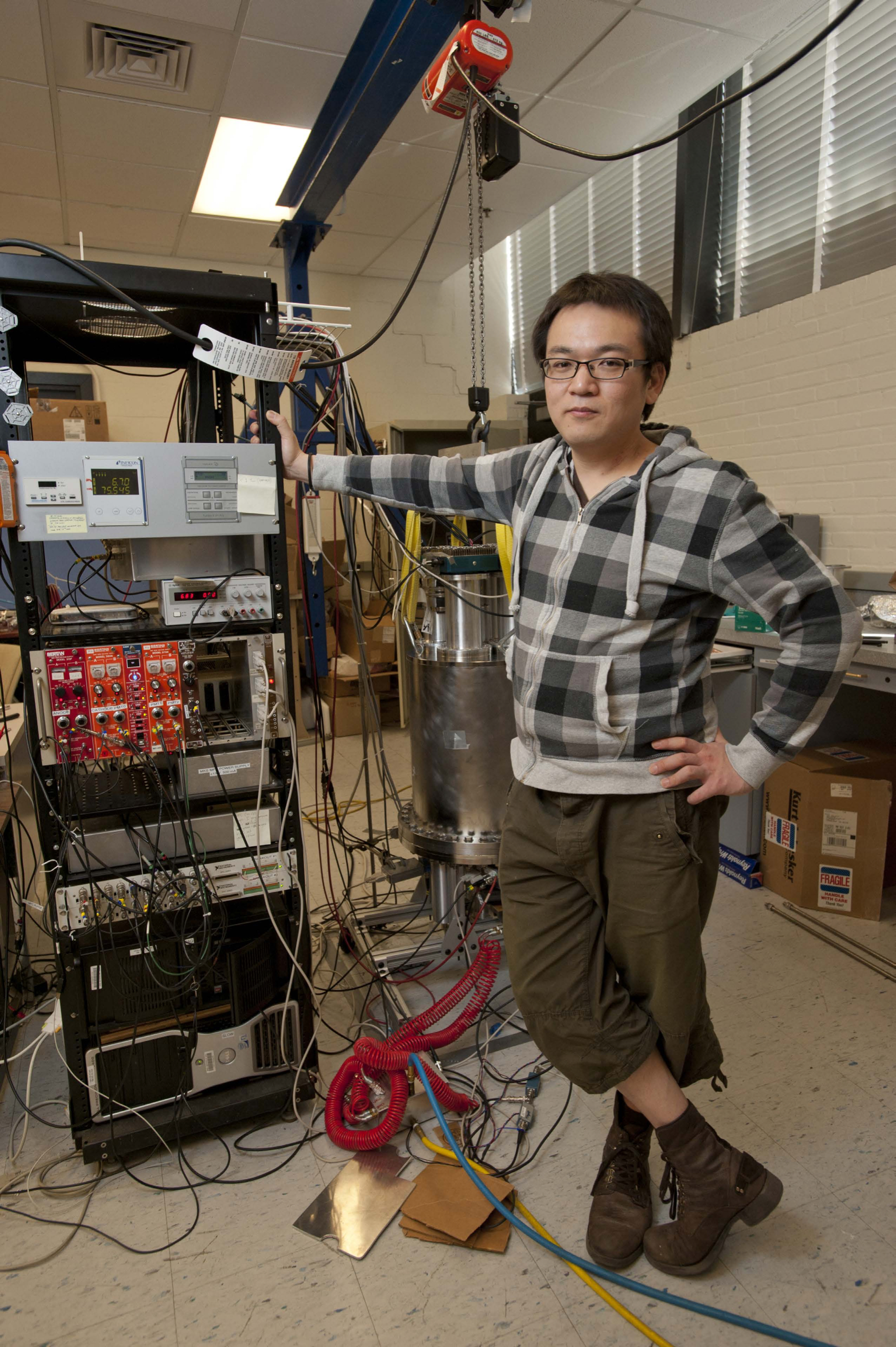

- Kazuhiro Terao, Staff Scientist, SLAC National Accelerator Laboratory, Stanford University

- Friday, December 10, 2021, 2:00pm–3:00pm, Virtual

- Machines to find ghost particles in big data

- Neutrinos are the most mysterious of the standard model particles. They interact only weakly and can pass through light years of matter without leaving a trace. A new generation of neutrino experiments is coming online to address questions related to neutrinos in the era of high-precision measurements. These experiments use Liquid Argon Time Projection Chambers (LArTPC) as detectors capable of recording images of charged particle tracks with high resolution. Analyzing high-resolution imaging data can be challenging, requiring the development of many algorithms to identify and assemble features of the events in order to reconstruct neutrino interactions. In this talk, we will discuss the challenges of data reconstruction for imaging detectors and recent work using deep neural networks (DNNs) for data reconstruction in LArTPC experiments.

- YouTube Recording | Talk Slides

- Yasaman Bahri, Research Scientist, Google Research, Brain Team

- Friday, November 12, 2021, 2:00pm–3:00pm, Virtual

- Understanding deep learning

- Deep neural networks are a rich class of function approximators that are now ubiquitous in many domains and enable new frontiers in physics and other sciences, but their function, limitations, and governing principles are not yet well understood. In this talk, we will overview results from a research program seeking to understand deep learning scientifically. These investigations draw ideas, tools, and inspiration from theoretical physics, with close guidance from computational experiments, and integrate perspectives from computer science and statistics. We will discuss some past highlights from the study of overparameterized neural networks, such as exact connections to Gaussian processes and linear models, as well as information propagation in deep networks, and focus on emerging questions surrounding the role of scale (so-called “scaling laws”) and predictability.

- YouTube Recording | Talk Slides

- Sven Krippendorf, Senior Researcher, Mathematical Physics and String Theory, Ludwig-Maximilians-Universität

- Friday, October 29, 2021, 2:00pm–3:00pm, Virtual

- Theoretical Physicists’ Biases Meet Machine Learning

- Many recent successes in machine learning (ML) resemble the success story in theoretical particle physics of utilizing symmetries as an organizing principle. In this talk, we discuss an introductory example where this procedure applied in ML leads to new insights into PDEs in mathematical physics. We also discuss methods for identifying symmetries of a system without requiring prior knowledge about such symmetries, including how to find a Lax pair/connection associated with integrable systems. Additionally, we explore how latent representations in neural networks can offer a close resemblance to variables used in dual descriptions established analytically in physical systems.

- YouTube Recording | Talk Slides

- Rose Yu, Assistant Professor, Computer Science and Engineering, UC San Diego

- Friday, October 15, 2021, 2:00pm–3:00pm, Virtual

- Physics-Guided AI for Learning Spatiotemporal Dynamics

- Applications such as public health, transportation, and climate science often require learning complex dynamics from large-scale spatiotemporal data. While deep learning has shown tremendous success in these domains, it remains a grand challenge to incorporate physical principles in a systematic manner into the design, training, and inference of such models. In this talk, we will demonstrate how to integrate physics into AI models and algorithms in a principled way to achieve both prediction accuracy and physical consistency. We will showcase the application of these methods to problems such as forecasting COVID-19, traffic modeling, and accelerating turbulence simulations.

- YouTube Recording | Talk Slides

- Ben Wandelt, Director, Lagrange Institute

- Friday, October 1, 2021, 2:00pm–3:00pm, Virtual

- Learning the Universe

- To realize the advances in cosmological knowledge we desire in the coming decade will require a new way for cosmological theory, simulation, and inference to interplay. As cosmologists, we wish to learn about the origin, composition, evolution, and fate of the cosmos from all accessible sources of astronomical data, such as the cosmic microwave background, galaxy surveys, or electromagnetic and gravitational wave transients. I will discuss simulation-based, full-physics modeling approaches to cosmology powered by new ways of designing and running simulations of cosmological observables and of confronting models with data. Innovative machine-learning methods play an important role in making this possible, with the goal of using current and next-generation data to reconstruct the cosmological initial conditions and constrain cosmological physics much more completely than has been feasible in the past.

- YouTube Recording | Talk Slides

- Surya Ganguli, Associate Professor, Applied Physics, Stanford University

- Friday, September 17, 2021, 2:00pm–3:00pm, Virtual

- Understanding computation using physics and exploiting physics for computation

- We are witnessing an exciting interplay between physics, computation, and neurobiology that spans multiple directions. In one direction, we can use the power of complex systems analysis, developed in theoretical physics and applied mathematics, to elucidate design principles governing how neural networks, both biological and artificial, learn and function. In another direction, we can exploit novel physics to instantiate new kinds of quantum neuromorphic computers using spins and photons. We will give several vignettes in both directions, including determining the best optimization problem to solve in order to perform regression in high dimensions and describing a quantum associative memory instantiated in a multimode cavity QED system.

- YouTube Recording | Talk Slides

Spring 2021

- Jim Halverson, Assistant Professor, Northeastern

- Thursday, April 29, 2021, 11:00am–12:00pm, Virtual

- Machine Learning and Knots

- Most applications of ML involve experimental data collected on Earth, rather than theoretical or mathematical datasets that have ab initio definitions, such as groups, topological classes of manifolds, or chiral gauge theories. Such mathematical landscapes are characterized by a high degree of structure and truly big data, including continuous or discrete infinite sets or finite sets larger than the number of particles in the visible universe. After introducing ab initio data, we will focus on one such dataset: mathematical knots, which admit a treatment using techniques from natural language processing via their braid representatives. Elements of knot theory will be introduced, including the use of ML for various problems that arise in it. For instance, reinforcement learning can be utilized to unknot complicated representations of trivial knots, similar to untangling headphones, but we will also use transformers for the unknot decision problem, as well as treating the general topological problem.

- YouTube Recording | Talk Slides

- Demba Ba, Professor, Harvard

- Thursday, April 15, 2021, 11:00am–12:00pm, Virtual

- Interpretable AI in Neuroscience: Sparse Coding, Artificial Neural Networks, and the Brain

- Sparse signal processing relies on the assumption that we can express data of interest as the superposition of a small number of elements from a typically very large set or dictionary. As a guiding principle, sparsity plays an important role in the physical principles that govern many systems, the brain in particular. In this talk, I will focus on neuroscience. In the first part, I will show how to use sparse dictionary learning to design, in a principled fashion, ANNs for solving unsupervised pattern discovery and source separation problems in neuroscience. In the second part, I will introduce a deep generalization of a sparse coding model that makes predictions as to the principles of hierarchical sensory processing in the brain. Using neuroscience as an example, I will make the case that sparse generative models of data, along with the deep ReLU networks associated with them, may provide a framework that utilizes deep learning, in conjunction with experiment, to guide scientific discovery.

- YouTube Recording | Talk Slides

- Doug Finkbeiner, Professor, Harvard

- Thursday, April 1, 2021, 11:00am–12:00pm, Virtual

- Beyond the Gaussian: A Higher-Order Correlation Statistic for the Interstellar Medium

- Our project to map Milky Way dust has produced 3-D maps of dust density and precise cloud distances, leading to the discovery of the structure known as the Radcliffe Wave. However, these advances have not yet allowed us to do something the CMB community takes for granted: read physical model parameters off of the observed density patterns. The CMB is (almost) a Gaussian random field, so the power spectrum contains the information needed to constrain cosmological parameters. In contrast, dust clouds and filaments require higher-order correlation statistics to capture relevant information. I will present a statistic based on the wavelet scattering transform (WST) that captures essential features of ISM turbulence in MHD simulations and maps them onto physical parameters. This statistic is lightweight (easy to understand and fast to evaluate) and provides a framework for comparing ISM theory and observation in a rigorous way.

- YouTube Recording | Talk Slides

- Phil Harris, Assistant Professor, MIT

- Thursday, March 18, 2021, 11:00am–12:00pm, Virtual

- Quick and Quirk with Quarks: Ultrafast AI with Real-Time systems and Anomaly detection For the LHC and beyond

- With data rates rivaling 1 petabit/s, the LHC detectors have some of the largest data rates in the world. If we were to save every collision to disk, we would exceed the world’s storage by many orders of magnitude. As a consequence, we need to analyze the data in real-time. In this talk, we will discuss new technology that allows us to deploy AI algorithms at ultra-low latencies to process information at the LHC at incredible speeds. Furthermore, we comment on how this can change the nature of real-time systems across many domains, including Gravitational Wave Astrophysics. In addition to real-time AI, we present new ideas on anomaly detection that build on recent developments in the field of semi-supervised learning. We show that these ideas are quickly opening up possibilities for a new class of measurements at the LHC and beyond.

- YouTube Recording | Talk Slides

- Max Tegmark, Professor, MIT

- Thursday, March 4, 2021, 11:00am–12:00pm, Virtual

- ML-discovery of equations, conservation laws and useful degrees of freedom

- A central goal of physics is to discover mathematical patterns in data. I describe how we can automate such tasks with machine learning and not only discover symbolic formulas accurately matching datasets (so-called symbolic regression), equations of motion and conserved quantities, but also auto-discover which degrees of freedom are most useful for predicting time evolution (for example, optimal generalized coordinates extracted from video data). The methods I present exploit numerous ideas from physics to recursively simplify neural networks, ranging from symmetries to differentiable manifolds, curvature and topological defects, and also take advantage of mathematical insights from knot theory and graph modularity.

- YouTube Recording | Talk Slides

- Pulkit Agrawal, Assistant Professor, MIT

- Thursday, February 18, 2021, 12:30pm–1:30pm, Virtual

- Challenges in Real World Reinforcement Learning

- In recent years, reinforcement learning (RL) algorithms have achieved impressive results on many tasks. However, for most systems, RL algorithms still remain impractical. In this talk, I will discuss some of the underlying challenges: (i) defining and measuring reward functions; (ii) data inefficiency; (iii) poor transfer across tasks. I will end by discussing some directions pursued in my lab to overcome these problems.

- YouTube Recording | Talk Slides

- Phiala Shanahan, Assistant Professor, MIT

- Thursday, February 4, 2021, 11:00am–12:00pm, Virtual

- Ab-initio AI for first-principles calculations of the structure of matter

- The unifying theme of IAIFI is “ab-initio AI”: novel approaches to AI that draw from, and are motivated by, aspects of fundamental physics. In this context, I will discuss opportunities for machine learning, in particular generative models, to accelerate first-principles lattice quantum field theory calculations in particle and nuclear physics. Particular challenges in this context include incorporating complex (gauge) symmetries into model architectures, and scaling models to the large number of degrees of freedom of state-of-the-art numerical studies. I will show the results of proof-of-principle studies that demonstrate that sampling from generative models can be orders of magnitude more efficient than traditional Hamiltonian/hybrid Monte Carlo approaches in this context.

- YouTube Recording | Talk Slides